Facial recognition has come a long way and, though still imperfect, it is constantly being tweaked to work better. The technology relies on algorithms that are fed huge numbers of photos. As the algorithms improve, they can now sort faces into categories such as race and gender, which raises another problem: bias.

In the early days of facial recognition, data was collected in labs from people paid by researchers. It was a tedious and costly affair, so data sets were few.

Things are different now with the internet, where more than 1.8 billion digital images are posted every single day. This is a treasure trove for researchers: one simply goes to a search engine, social network or mugshot database and harvests people’s photos.

Flickr is one of those sites, and IBM took advantage of its non-commercial Creative Commons licensing, which allows users’ photos to be reused without paying license fees, thereby sidestepping copyright issues.

Yahoo, which owned Flickr at the time, released the data set of 100 million images for benchmarking purposes. IBM concentrated on 1 million of those photos and annotated each face with measurements and attributes, including age, skin tone and gender.

IBM isn’t the only one engaged in this practice. Many collections of people’s photos are compiled by academics who fail to say where their data comes from, and it has been suggested that these data sets are built from the billions of photos scraped off social media platforms or the web without users’ permission.

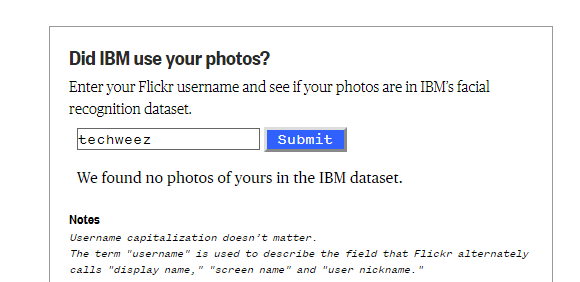

After this revelation, IBM said that Flickr users can opt out of its database. The process itself is hard, as NBC News found out: IBM didn’t release the list of Flickr users, which makes it difficult to find out whether your photos were included, and photographers are required to email IBM to have their photos removed. NBC did, however, build a tool that at least helps you check whether your photos were included in IBM’s facial recognition data set.

Even then, the effort is largely futile, since the photo is removed only from IBM’s copy and not from those of its research partners or from the Flickr data set. Under GDPR, however, IBM could face legal action.

The whole issue has photographers split. Some are for it if it improves safety, while others are against it if it could be used to watch over them. Still others simply wish IBM had asked for permission before using their photos.

Alarms raised

The unauthorized collection of people’s photos is raising not only privacy issues but also concerns about how the same information could be used to oppressively target minorities, especially if it is used for surveillance.

IBM clarified that its Diversity in Faces dataset won’t be used for commercial purposes, but experts believe that line is a thin one.

“Facial recognition can be incredibly harmful when it’s inaccurate and incredibly oppressive the more accurate it gets.”

~ Woody Hartzog, a law and computer science professor at Northeastern University.